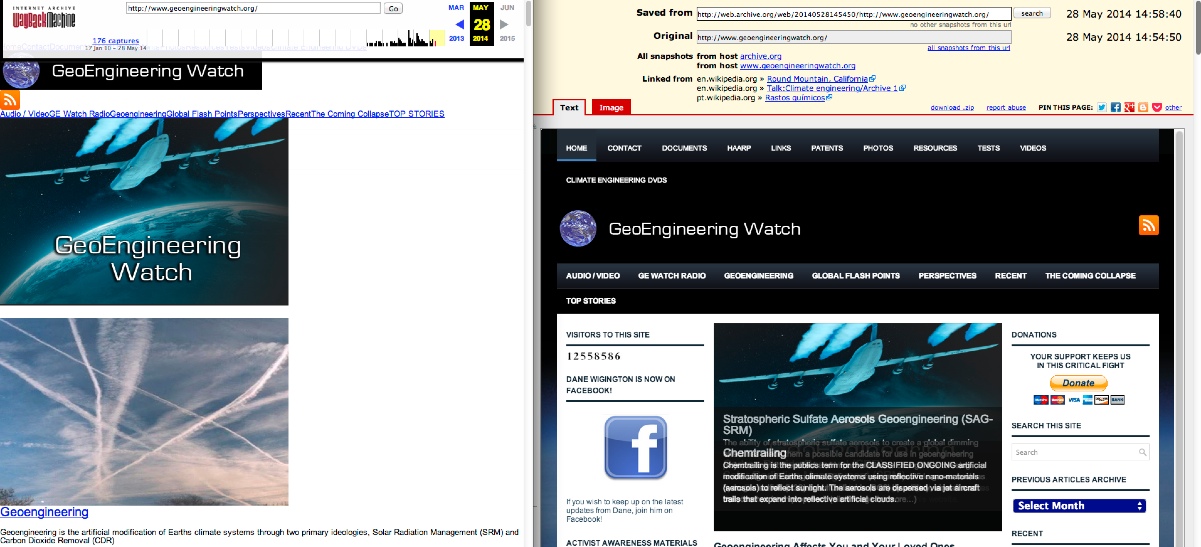

The most common web archiving technique uses web crawlers to automate the process of collecting web pages. The metadata recorded alongside each captured resource is useful in establishing the authenticity and provenance of the archived collection.
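To illustrate how a crawler automates collection, the following is a minimal sketch of a breadth-first crawl, not the method of any particular archive. The names `LinkExtractor`, `crawl`, `fetch`, and `max_pages` are hypothetical and introduced only for this example. `fetch` is assumed to be any callable that returns a page's HTML as a string, or `None` when the page cannot be retrieved. Concerns a production crawler would handle, such as robots.txt, politeness delays, and non-HTML resources, are deliberately left out.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkExtractor(HTMLParser):
    """Collect the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seed, fetch, max_pages=100):
    """Breadth-first crawl starting at `seed`.

    `fetch(url)` is an assumed callable returning HTML text or None.
    Returns a dict mapping each captured URL to its HTML.
    """
    queue = deque([seed])
    seen = {seed}
    captured = {}
    while queue and len(captured) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue  # unreachable page: skip it, keep crawling
        captured[url] = html
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            # Resolve relative links and drop "#fragment" suffixes so the
            # same page is not queued twice under different URLs.
            absolute, _fragment = urldefrag(urljoin(url, href))
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return captured
```

Passing `fetch` as a parameter keeps the sketch independent of any network library: a dictionary's `get` method is enough to try it on a handful of in-memory pages.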


Web archivists generally archive various types of web content including HTML web pages, style sheets, JavaScript, images, and video. They also archive metadata about the collected resources such as access time, MIME type, and content length. Despite the fact that there is no centralized responsibility for its preservation, web content is rapidly becoming the official record. For example, in 2017, the United States Department of Justice affirmed that the government treats the President's tweets as official statements.
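The metadata fields named above can be pictured as a small record stored next to each capture. The sketch below is illustrative only: the function name `capture_record` and the dictionary keys are hypothetical, and the SHA-256 digest is an added assumption (a common way to support later integrity checks), not something the text above prescribes.

```python
import hashlib
from datetime import datetime, timezone


def capture_record(url, body, content_type, accessed_at=None):
    """Build a metadata record for one captured resource.

    `body` is the raw response bytes and `content_type` the value of the
    HTTP Content-Type header. All field names here are illustrative.
    """
    if accessed_at is None:
        accessed_at = datetime.now(timezone.utc)
    # Keep only the media type, dropping parameters such as "; charset=utf-8".
    mime_type = content_type.split(";", 1)[0].strip().lower()
    return {
        "url": url,
        "access_time": accessed_at.isoformat(),
        "mime_type": mime_type,
        "content_length": len(body),
        # Assumption: a digest is stored so the capture can be verified later.
        "sha256": hashlib.sha256(body).hexdigest(),
    }
```

For instance, calling `capture_record("http://example.org/", b"<html></html>", "text/html; charset=utf-8")` yields a record whose `mime_type` is `text/html` and whose `content_length` is 13.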

The Internet Archive also developed many of its own tools for collecting and storing its data, including PetaBox for storing the large amounts of data efficiently and safely, and Heritrix, a web crawler developed in conjunction with the Nordic national libraries. As of 2018, the Internet Archive was home to 40 petabytes of data. Other projects launched around the same time included Australia's Pandora and Tasmanian web archives and Sweden's Kulturarw3.

From 2001 to 2010, the International Web Archiving Workshop (IWAW) provided a platform to share experiences and exchange ideas. The International Internet Preservation Consortium (IIPC), established in 2003, has facilitated international collaboration in developing standards and open source tools for the creation of web archives. The now-defunct Internet Memory Foundation was founded in 2004 by the European Commission in order to archive the web in Europe. This project developed and released many open source tools, such as "rich media capturing, temporal coherence analysis, spam assessment, and terminology evolution detection." The data from the foundation is now housed by the Internet Archive, but is not currently publicly accessible.

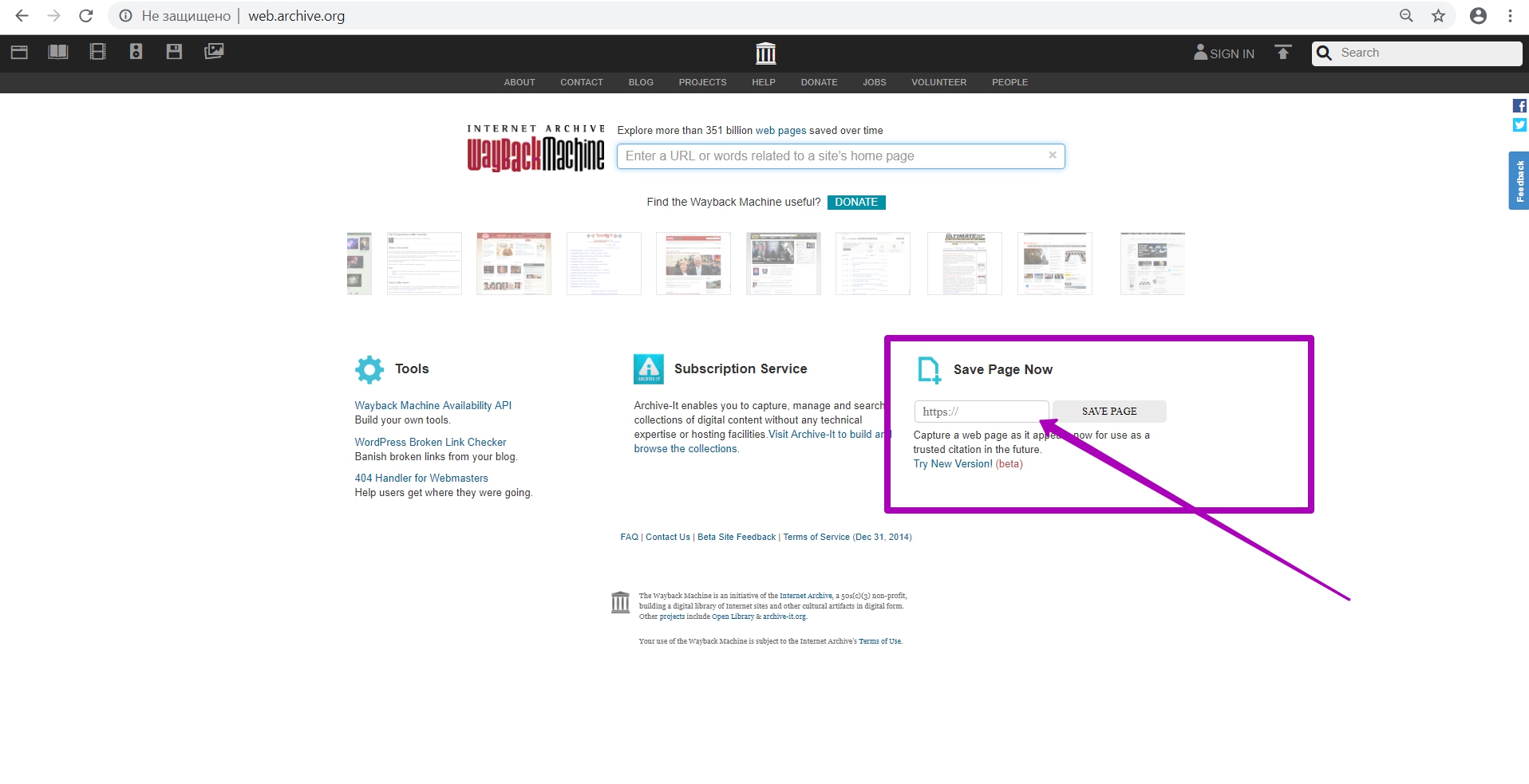

While curation and organization of the web have been prevalent since the mid- to late-1990s, one of the first large-scale web archiving projects was the Internet Archive, a non-profit organization created by Brewster Kahle in 1996. The Internet Archive released its own search engine for viewing archived web content, the Wayback Machine, in 2001.

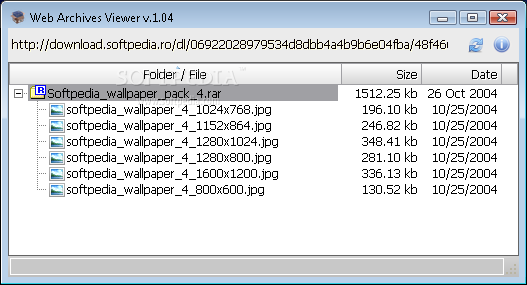


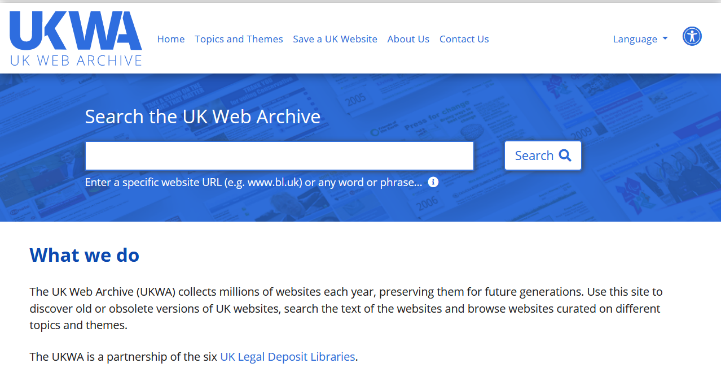

The growing portion of human culture created and recorded on the web makes it inevitable that more and more libraries and archives will have to face the challenges of web archiving. National libraries, national archives, and various consortia of organizations are also involved in archiving culturally important web content. Commercial web archiving software and services are also available to organizations that need to archive their own web content for corporate heritage, regulatory, or legal purposes.

Web archiving is the process of collecting portions of the World Wide Web to ensure the information is preserved in an archive for future researchers, historians, and the public. Web archivists typically employ web crawlers for automated capture due to the massive size and amount of information on the Web. The largest web archiving organization based on a bulk crawling approach is the Wayback Machine, which strives to maintain an archive of the entire Web.